Deep learning for sequential data has evolved from handling time series and language modeling to enabling intelligent decision-making systems through reinforcement learning. While traditional supervised learning focuses on static datasets, reinforcement learning operates in dynamic environments where agents learn by interacting with data sequences over time.

In my previous article on music generation, I explained how sequential deep learning models like recurrent neural networks and transformers can generate meaningful outputs based on patterns in sequences.

This article builds on that foundation by exploring how sequential learning techniques power reinforcement learning systems, where decisions are made step by step, influenced by previous states and rewards.

For executives and AI architects, this shift is critical. Reinforcement learning is not just an academic concept; it is driving innovation in robotics, autonomous systems, financial optimization, and personalized user experiences.

What is Reinforcement Learning in the Context of Sequential Data?

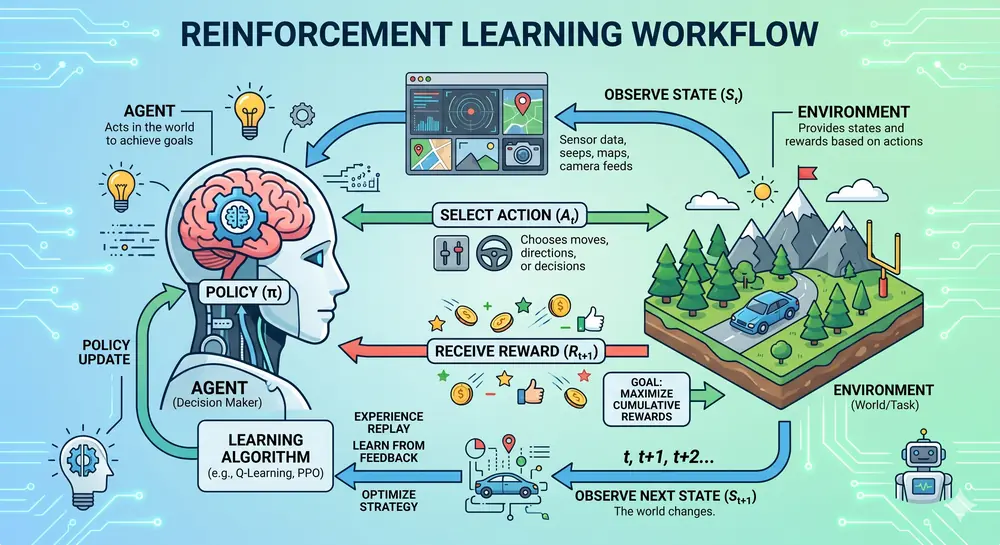

Reinforcement learning is a paradigm where an agent learns to make decisions by interacting with an environment. At each step, the agent observes a state, takes an action, and receives a reward. The goal is to maximize cumulative reward over time.

This process is inherently sequential because each decision influences future states and outcomes.

Key components include:

- Agent — the decision maker

- Environment — the system the agent interacts with

- State — the current situation

- Action — the choice made by the agent

- Reward — feedback from the environment

Unlike supervised learning, there is no labeled dataset. Instead, the system learns from experience.
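
To make the loop concrete, here is a minimal sketch of an agent interacting with an environment. The `CorridorEnv` class, its reward values, and the two policies are hypothetical names invented for illustration; real projects would typically use an environment library instead. The `discounted_return` helper shows one common way to define "cumulative reward", where a discount factor `gamma` weights near-term rewards more heavily than distant ones.

```python
import random


class CorridorEnv:
    """Hypothetical toy environment: a corridor of cells 0..length-1.

    The agent starts in cell 0 and the episode ends when it reaches the
    last cell. Every step costs -1, and reaching the goal pays +10.
    """

    def __init__(self, length=5):
        self.length = length
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # action: 0 = move left, 1 = move right
        move = 1 if action == 1 else -1
        self.state = min(max(self.state + move, 0), self.length - 1)
        done = self.state == self.length - 1
        reward = 10.0 if done else -1.0
        return self.state, reward, done


def run_episode(env, policy, max_steps=100):
    """Run one episode and return the list of rewards collected."""
    state = env.reset()
    rewards = []
    for _ in range(max_steps):
        action = policy(state)                  # agent observes state, picks action
        state, reward, done = env.step(action)  # environment returns feedback
        rewards.append(reward)
        if done:
            break
    return rewards


def discounted_return(rewards, gamma=0.99):
    """Cumulative reward with discount factor gamma, computed backwards."""
    total = 0.0
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


if __name__ == "__main__":
    random.seed(0)
    env = CorridorEnv()
    random_policy = lambda state: random.choice([0, 1])
    always_right = lambda state: 1
    print("random policy:", discounted_return(run_episode(env, random_policy)))
    print("always right: ", discounted_return(run_episode(env, always_right)))
```

Note that nothing in the loop tells the agent which action was correct. It only sees rewards, which is the practical meaning of "no labeled dataset".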

Why Sequential Deep Learning is Essential for Reinforcement Learning

Sequential data models are critical in reinforcement learning because:

- Decisions depend on history — Past actions influence future outcomes

- Context evolves over time — States change dynamically

- Long-term dependencies matter — Actions have delayed effects

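Traditional reinforcement learning relied on tabular methods, which store one value per state-action pair and break down when the state space is large. Modern systems use deep neural networks to approximate policies and value functions, which lets them scale to high-dimensional inputs such as images and sensor streams.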

Key deep learning architectures include:

- Recurrent Neural Networks — useful for capturing temporal dependencies

- Long Short-Term Memory networks — effective for long sequences

- Transformers — increasingly used for sequence modeling and decision making

- Convolutional networks — used in visual environments

Types of Reinforcement Learning Approaches

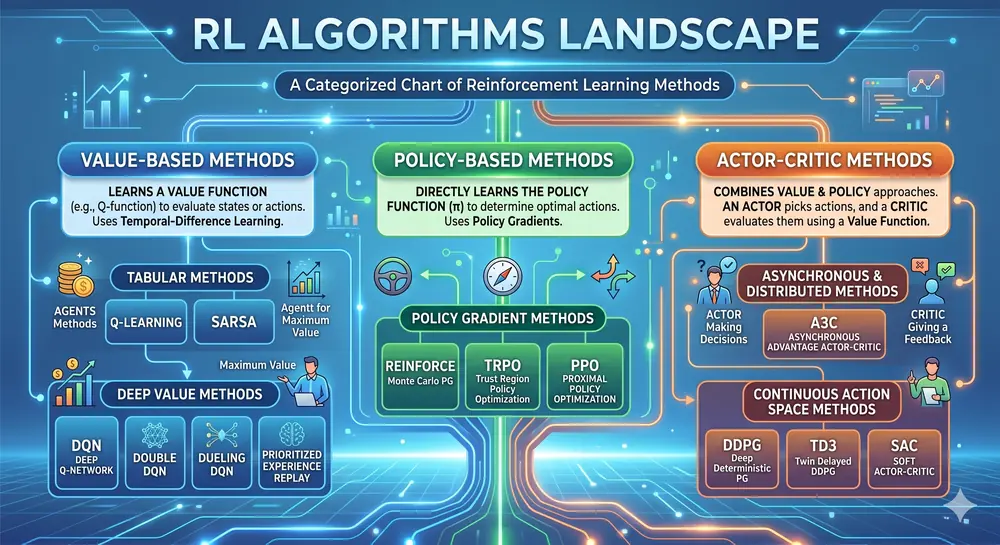

Value-Based Methods

These methods estimate the expected long-term reward (the value) of each action in a given state and choose the action with the highest estimate. Deep Q Networks are a popular example.

Use cases:

- Game playing

- Resource allocation

- Trading and portfolio optimization
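
To illustrate the value-based idea, here is a minimal sketch using tabular Q-learning rather than a Deep Q Network. A Deep Q Network replaces the lookup table with a neural network, but the update target has the same shape. The corridor environment, the hyperparameters, and all function names are assumptions chosen for illustration.

```python
import random
from collections import defaultdict

N_STATES, GOAL = 5, 4          # hypothetical corridor: states 0..4, goal at 4
ACTIONS = (0, 1)               # 0 = left, 1 = right


def step(state, action):
    """Toy dynamics: -1 per step, +10 on reaching the goal."""
    next_state = min(max(state + (1 if action == 1 else -1), 0), N_STATES - 1)
    done = next_state == GOAL
    return next_state, (10.0 if done else -1.0), done


def train_q_table(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = defaultdict(float)     # maps (state, action) -> estimated value
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore occasionally, otherwise act greedily.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action)
            # Target: reward plus discounted value of the best next action.
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q


def greedy_policy(q):
    """Best-known action for every non-terminal state."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]


q_table = train_q_table()
print(greedy_policy(q_table))
```

The `epsilon` parameter is one simple way to handle exploration: with a small probability the agent tries a random action instead of the one it currently believes is best.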

Policy-Based Methods

These directly optimize the policy, which maps states to actions.

Use cases:

- Robotics

- Continuous control systems

- Natural language processing
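
As a rough sketch of what "directly optimizing the policy" can look like, the example below applies the REINFORCE update to a softmax policy. To keep it short it uses a hypothetical one-state problem with fixed per-action rewards, so the policy is just a probability distribution over actions. The reward values, learning rate, and function names are assumptions made for illustration.

```python
import math
import random


def softmax(prefs):
    """Turn raw preferences into action probabilities."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def train_policy(rewards=(0.0, 1.0), steps=2000, lr=0.1, seed=0):
    """REINFORCE on a one-state problem where action i always pays rewards[i]."""
    rng = random.Random(seed)
    prefs = [0.0] * len(rewards)   # policy parameters: one preference per action
    for _ in range(steps):
        probs = softmax(prefs)
        action = rng.choices(range(len(rewards)), weights=probs)[0]
        reward = rewards[action]
        # For a softmax policy, the gradient of log pi(action) with respect
        # to preference a is (1 if a == action else 0) - pi(a).
        for a in range(len(prefs)):
            grad_log = (1.0 if a == action else 0.0) - probs[a]
            prefs[a] += lr * reward * grad_log
    return softmax(prefs)


print(train_policy())
```

There is no value table here. The update raises the probability of actions in proportion to the reward they produced, which is why the same idea carries over to continuous action spaces where enumerating action values is impractical.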

Actor-Critic Methods

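These combine both approaches: an actor learns the policy while a critic estimates values and scores the actor's choices. The critic's feedback typically reduces the variance of policy updates, which improves stability and performance.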

Use cases:

- Autonomous driving

- Industrial automation

- Real-time decision systems
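
The following is a minimal sketch of a one-step actor-critic update, assuming a small hypothetical corridor environment with tabular parameters. The actor holds action preferences per state, the critic holds a value estimate per state, and the temporal-difference (TD) error from the critic drives both updates. Names and hyperparameters are illustrative choices, not a reference implementation.

```python
import math
import random

N_STATES, GOAL = 5, 4   # hypothetical corridor: states 0..4, goal at 4


def step(state, action):
    """Toy dynamics: action 1 moves right, 0 moves left; +10 at the goal."""
    next_state = min(max(state + (1 if action == 1 else -1), 0), N_STATES - 1)
    done = next_state == GOAL
    return next_state, (10.0 if done else -1.0), done


def action_probs(prefs):
    """Softmax over one state's action preferences."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def train_actor_critic(episodes=2000, actor_lr=0.1, critic_lr=0.1, gamma=0.9, seed=0):
    rng = random.Random(seed)
    prefs = [[0.0, 0.0] for _ in range(N_STATES)]  # actor parameters
    values = [0.0] * N_STATES                      # critic estimates
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            probs = action_probs(prefs[state])
            action = rng.choices((0, 1), weights=probs)[0]
            next_state, reward, done = step(state, action)
            # TD error: did this step go better or worse than the critic expected?
            target = reward + (0.0 if done else gamma * values[next_state])
            td_error = target - values[state]
            values[state] += critic_lr * td_error          # critic update
            for a in (0, 1):                               # actor update
                grad_log = (1.0 if a == action else 0.0) - probs[a]
                prefs[state][a] += actor_lr * td_error * grad_log
            state = next_state
    return prefs, values


prefs, values = train_actor_critic()
print([round(action_probs(p)[1], 3) for p in prefs[:GOAL]])
```

Compared with a pure policy-gradient update, the actor here is scaled by the TD error rather than by the raw reward, so an action is reinforced only when it turns out better than the critic predicted.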

Real-World Applications Driving Business Value

Autonomous Systems

Self-driving systems can use reinforcement learning to make sequential decisions in real time. These systems process sensor data streams and adapt continuously.

Recommendation Systems

Streaming platforms and e-commerce use reinforcement learning to personalize user experiences based on sequential behavior patterns.

Financial Trading

Reinforcement learning models analyze time series data to optimize trading strategies and portfolio management.

Robotics and Manufacturing

Industrial robots use reinforcement learning for adaptive control, improving efficiency and reducing downtime.

Healthcare

Treatment planning and drug discovery benefit from sequential decision modeling.

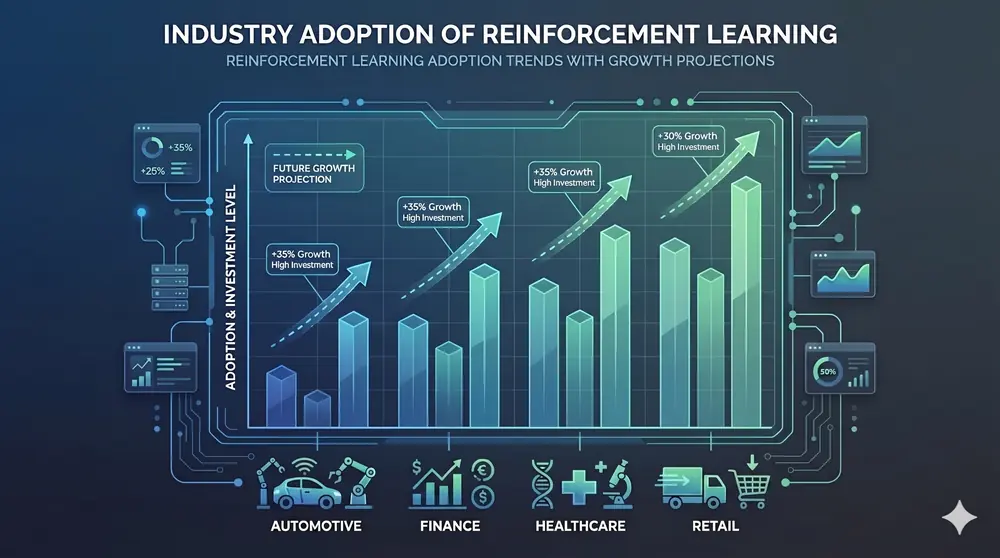

Data Insights and Industry Trends

The global reinforcement learning market is growing rapidly due to increased adoption in automation and AI-driven decision systems.

Key insights:

- Companies using reinforcement learning report up to 30% improvement in operational efficiency

- AI-driven recommendation systems increase user engagement by over 40%

- Autonomous systems reduce human intervention by significant margins

- Organizations investing in sequential deep learning gain a competitive advantage in predictive and adaptive intelligence

Linking Sequential Models with Reinforcement Learning

As covered in my previous article on music generation, sequential models learn patterns to predict the next element in a sequence. Reinforcement learning extends this concept to predicting optimal actions over time.

For example:

- Music generation predicts the next note

- Reinforcement learning predicts the next best action

Both rely on understanding sequence dependencies.

Transformers are now bridging the gap by treating decision making as a sequence modeling problem, which makes them highly relevant for reinforcement learning applications.

Modern Architectures Transforming Reinforcement Learning

Deep Q Networks

- Use neural networks to approximate Q-values

- Effective for discrete action spaces

Proximal Policy Optimization

- Limits how far each update can move the policy by clipping the objective, which stabilizes training

- Widely used in production systems
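
The core of Proximal Policy Optimization is a clipped surrogate objective. As a minimal sketch, the function below computes it for a batch, assuming the probability ratios and advantage estimates have already been calculated elsewhere. The function name and the default `clip_eps=0.2` are illustrative choices.

```python
def ppo_clipped_objective(ratios, advantages, clip_eps=0.2):
    """Average clipped surrogate objective over a batch of samples.

    ratios[i] is pi_new(a|s) / pi_old(a|s) for sample i, and
    advantages[i] is the advantage estimate for that sample.
    """
    total = 0.0
    for ratio, adv in zip(ratios, advantages):
        clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Take the more pessimistic of the unclipped and clipped terms, so
        # moving the policy far from the old one earns no extra credit.
        total += min(ratio * adv, clipped_ratio * adv)
    return total / len(ratios)


print(ppo_clipped_objective([1.5, 0.5], [1.0, -1.0]))
```

In training, this quantity is maximized with respect to the new policy's parameters. Once the ratio leaves the range `[1 - clip_eps, 1 + clip_eps]` in the direction that would improve the objective, the gradient for that sample vanishes, which keeps each update close to the previous policy.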

Transformer-Based RL

- Applies attention mechanisms to sequential decision making

- Attends directly to distant time steps, which helps capture long-term dependencies
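
To show what attention over a trajectory looks like, here is a minimal pure-Python sketch of causal scaled dot-product attention. Each time step can attend to itself and to earlier steps, but not to future ones, which matches the constraint that a decision can only depend on what has already happened. The function name and the plain-list representation are assumptions for illustration; production systems would use a tensor library.

```python
import math


def causal_attention(queries, keys, values):
    """Scaled dot-product attention where step t only attends to steps <= t.

    Each argument is a list of vectors (lists of floats), one per time step.
    """
    dim = len(queries[0])
    outputs = []
    for t, q in enumerate(queries):
        # Causal mask: only score the current and earlier time steps.
        scores = [
            sum(qi * ki for qi, ki in zip(q, keys[s])) / math.sqrt(dim)
            for s in range(t + 1)
        ]
        m = max(scores)
        weights = [math.exp(sc - m) for sc in scores]
        norm = sum(weights)
        weights = [w / norm for w in weights]
        # Output is the attention-weighted average of the visible values.
        outputs.append([
            sum(w * values[s][d] for s, w in enumerate(weights))
            for d in range(len(values[0]))
        ])
    return outputs


trajectory = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # hypothetical state embeddings
print(causal_attention(trajectory, trajectory, trajectory))
```

Because every earlier step is scored directly, a reward-relevant event from many steps ago can influence the current output without passing through a chain of recurrent updates.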

Challenges in Reinforcement Learning

Despite its potential, reinforcement learning comes with challenges:

- Data inefficiency — Training requires large amounts of interaction data

- Exploration vs exploitation trade-off — Balancing learning new strategies with using known ones

- Reward design — Poor reward functions lead to suboptimal behavior

- Scalability — High computational cost

These challenges require careful system design and robust engineering practices.

Best Practices for Implementation

- Start with simulation environments

- Use transfer learning to reduce training time

- Design meaningful reward functions

- Monitor performance continuously

- Combine supervised learning with reinforcement learning when possible

For enterprises, building a scalable infrastructure is critical.

Future of Reinforcement Learning and Sequential AI

The future of reinforcement learning is closely tied to advancements in deep learning and compute power.

Emerging trends include:

- Integration with large language models

- Multi-agent reinforcement learning

- Real-time adaptive systems

- Edge AI deployment

As AI systems become more autonomous, reinforcement learning will play a central role in decision intelligence.

Key Takeaways

- Reinforcement Learning is Sequential by Nature — Decisions depend on temporal patterns and past states

- Deep Learning Enables Scale — Neural networks approximate complex policies and value functions

- Real-World Impact — Autonomous systems, recommendations, trading, and robotics are driving adoption

- Strategic Advantage — Organizations investing in RL gain competitive intelligence capabilities

- Future is Autonomous — As systems become more intelligent, RL-based decision making becomes essential

As AI continues to evolve, the ability to make intelligent, adaptive decisions in dynamic environments becomes a competitive necessity. Reinforcement learning and sequential deep learning are at the forefront of this transformation.