Transfer learning has revolutionized computer vision. Projects that once required months of work and millions of dollars can now reach production in weeks at a fraction of the cost. Rather than training models from scratch, we start from networks pretrained on ImageNet, a dataset of roughly 14 million labeled images, and adapt them to specialized vision tasks.

This article builds on my previous explorations of transfer learning fundamentals and fine-tuning strategies. Here I dive into the practical architectures, implementation patterns, and deployment strategies that leading technology companies use to build competitive vision systems.

Why Transfer Learning Transformed Computer Vision

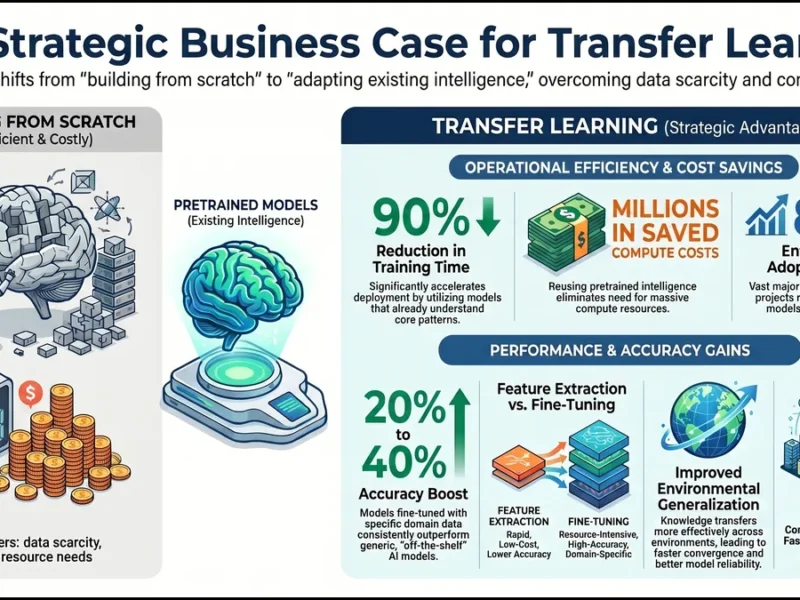

Before transfer learning, building a custom vision system required massive datasets, months of training, and infrastructure investments that could exceed a million dollars. Transfer learning changed that.

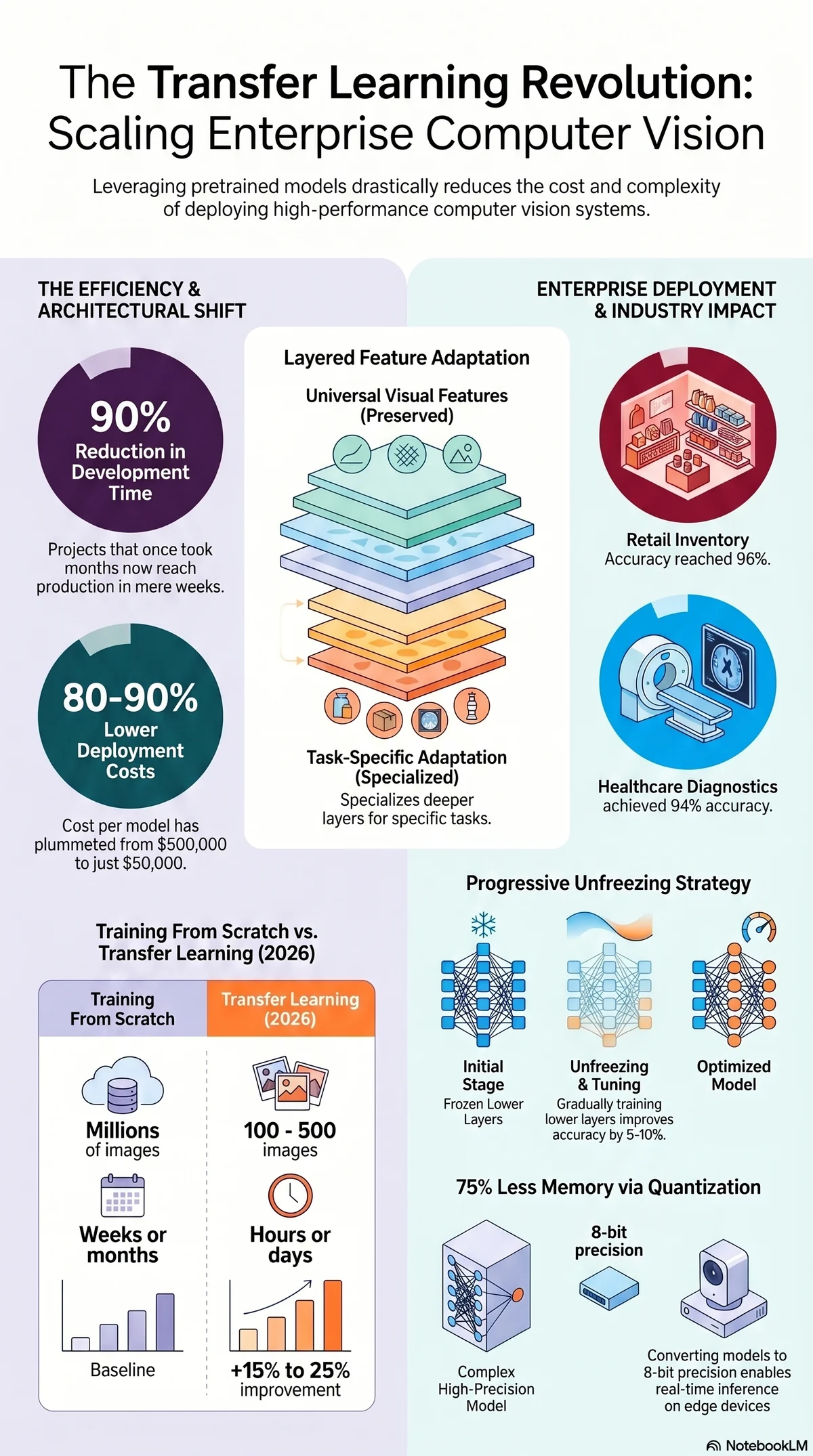

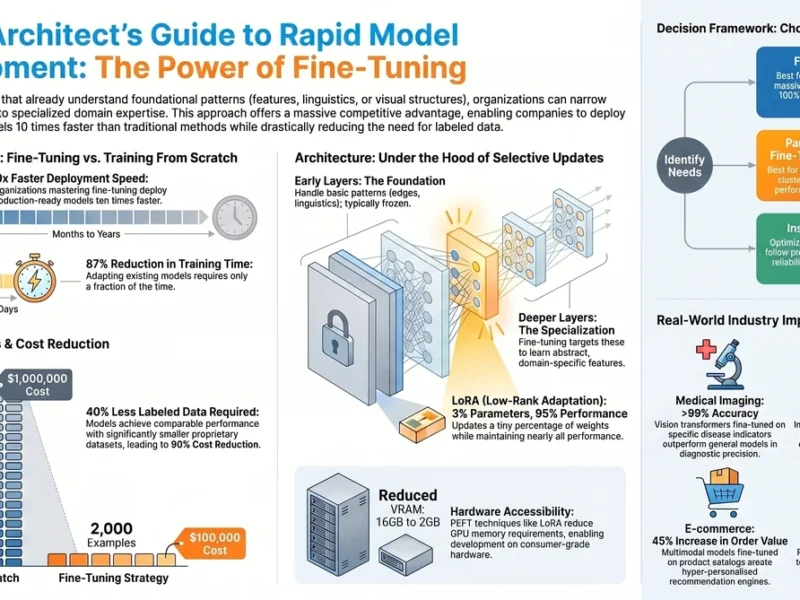

By starting from ImageNet-pretrained models, organizations reduced labeled-data requirements from millions of images to hundreds, training time from weeks to days, and costs from hundreds of thousands of dollars to thousands. Benchmarks from 2026 show a 90% reduction in development time, 15-25% accuracy improvements, and per-model costs dropping from $500,000 to $50,000.

Transfer learning is now the dominant approach. Google, Meta, Microsoft, and Amazon publish pretrained models specifically designed for downstream adaptation, and training from scratch has become rare in production environments.

The Architecture of Vision Transfer Learning

Convolutional neural networks process images through a stack of layers that extract progressively more abstract features: early layers detect edges and colors, middle layers recognize textures and shapes, and deeper layers identify complex objects. Transfer learning keeps the early layers' general-purpose visual features and adapts the deeper layers to your specific task.

Modern architectures include Vision Transformers (ViTs), which split an image into fixed-size patches and process them as a sequence, enabling better scaling and generalization. How deeply to adapt depends on domain similarity: similar domains need minimal adaptation, while specialized domains such as medical imaging require extensive fine-tuning.
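To make the patch-sequence idea concrete, here is a minimal sketch in plain Python (no deep-learning framework assumed) that cuts an image, represented as a nested list of single-channel pixel rows, into non-overlapping square patches and flattens each one into a vector. The 16-pixel patch size mirrors the common ViT-B/16 configuration; a real ViT would then linearly project each flattened patch and add a position embedding, which this sketch omits.

```python
from typing import List

Image = List[List[float]]  # H rows of W single-channel pixel values

def patchify(image: Image, patch_size: int) -> List[List[float]]:
    """Split an H x W image into non-overlapping patch_size x patch_size patches,
    returned in row-major order, each flattened into a single vector."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[row][col]
                for row in range(top, top + patch_size)
                for col in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches

# A 224 x 224 image with 16 x 16 patches becomes a sequence of 14 * 14 = 196 tokens.
dummy = [[0.0] * 224 for _ in range(224)]
sequence = patchify(dummy, patch_size=16)
print(len(sequence), len(sequence[0]))  # 196 256
```

Each of the 196 "tokens" carries 16 x 16 = 256 raw values (times 3 for RGB input), which is why the transformer sees an image as a sequence rather than a grid.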

Advanced practitioners add auxiliary networks that force the model to learn generalizable representations rather than task-specific quirks, reportedly improving accuracy by 10-15%.

Practical Transfer Learning Strategies for Enterprise Vision

Enterprise vision projects face constraints on production reliability, real-time inference, budget, and integration. The key strategic decisions are:

Model Selection: ResNet provides proven performance for general tasks; EfficientNet excels at edge deployment; YOLO and Faster R-CNN dominate object detection; and pretrained ViT models transfer well even when labeled data is limited. Leading companies also maintain internal models pretrained on proprietary data to serve as domain-adapted foundations.

Fine-tuning Depth: Shallow fine-tuning (training only the top layers) requires less data but may leave accuracy on the table. Deep fine-tuning improves accuracy but demands more data and compute. Progressive unfreezing, which gradually unfreezes lower layers and trains them with reduced learning rates, yields 5-10% improvements over training all layers simultaneously, especially with limited data.
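Progressive unfreezing is mostly bookkeeping: deciding, epoch by epoch, which layer groups are trainable and at what learning rate. The framework-agnostic sketch below assumes the network has been split into ordered layer groups (the group names are hypothetical), unfreezes one more group from the top each epoch, and divides each group's learning rate by a fixed factor per step away from the head. The 2.6 factor is borrowed from the discriminative learning rates in ULMFiT and is only a starting point. In PyTorch, such a plan would map onto `requires_grad` flags and optimizer parameter groups.

```python
from typing import Dict, List

def unfreezing_plan(
    groups: List[str], epoch: int, base_lr: float, decay: float = 2.6
) -> Dict[str, float]:
    """Return {group_name: learning_rate} for the groups trainable at `epoch`.

    `groups` is ordered from input (lowest) to output (head). At epoch 0 only the
    head trains; each later epoch unfreezes one more group below it. A group's
    learning rate is base_lr / decay ** (its distance from the head).
    Groups missing from the result stay frozen.
    """
    num_trainable = min(epoch + 1, len(groups))
    plan = {}
    for distance, name in enumerate(reversed(groups)):
        if distance >= num_trainable:
            break
        plan[name] = base_lr / (decay ** distance)
    return plan

# Hypothetical grouping of a ResNet-style backbone plus a newly added classifier head.
layer_groups = ["stem", "stage1", "stage2", "stage3", "stage4", "head"]
for epoch in range(3):
    print(epoch, unfreezing_plan(layer_groups, epoch, base_lr=1e-3))
```

Keeping the lowest groups frozen longest, and at the smallest learning rates, protects the generic edge and texture detectors while the task-specific layers adapt.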

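Regularization: Mixup (training on random weighted blends of image pairs and their labels) and CutMix (pasting a patch from one image onto another and mixing the labels in proportion to the patch area) help prevent overfitting on small datasets.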
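The two regularizers differ mainly in how they combine a pair of training examples. The sketch below uses plain Python lists for single-channel images and one-hot labels (real pipelines operate on batched tensors). In both functions the mixing weight is drawn from a Beta(alpha, alpha) distribution, and the label is mixed in the same proportion as the image content; the alpha values in the usage lines are illustrative assumptions.

```python
import math
import random
from typing import List, Tuple

Image = List[List[float]]
Label = List[float]  # one-hot or soft label vector

def mixup(x1: Image, y1: Label, x2: Image, y2: Label,
          alpha: float, rng: random.Random) -> Tuple[Image, Label]:
    """Mixup: blend two whole images and their labels with weight lam ~ Beta(alpha, alpha)."""
    lam = rng.betavariate(alpha, alpha)
    x = [[lam * a + (1 - lam) * b for a, b in zip(r1, r2)] for r1, r2 in zip(x1, x2)]
    y = [lam * a + (1 - lam) * b for a, b in zip(y1, y2)]
    return x, y

def cutmix(x1: Image, y1: Label, x2: Image, y2: Label,
           alpha: float, rng: random.Random) -> Tuple[Image, Label]:
    """CutMix: paste a random box from x2 into a copy of x1; mix labels by pasted area."""
    h, w = len(x1), len(x1[0])
    lam = rng.betavariate(alpha, alpha)
    cut_h, cut_w = int(h * math.sqrt(1 - lam)), int(w * math.sqrt(1 - lam))
    center_r, center_c = rng.randrange(h), rng.randrange(w)
    top, bottom = max(center_r - cut_h // 2, 0), min(center_r + cut_h // 2, h)
    left, right = max(center_c - cut_w // 2, 0), min(center_c + cut_w // 2, w)
    x = [row[:] for row in x1]  # copy so the input image is not modified
    for r in range(top, bottom):
        x[r][left:right] = x2[r][left:right]
    # Recompute lam from the box actually pasted (it may be clipped at the border).
    lam = 1 - ((bottom - top) * (right - left)) / (h * w)
    y = [lam * a + (1 - lam) * b for a, b in zip(y1, y2)]
    return x, y

rng = random.Random(0)
image_a = [[1.0] * 32 for _ in range(32)]
image_b = [[0.0] * 32 for _ in range(32)]
mixed_image, mixed_label = cutmix(image_a, [1.0, 0.0], image_b, [0.0, 1.0], alpha=1.0, rng=rng)
print(mixed_label)  # label weights match the share of pixels taken from each image
```

Because the labels become soft, the model is discouraged from making overconfident predictions on any single memorized example.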

Real-World Vision Applications

Autonomous Vehicles: Waymo and Tesla fine-tune pretrained detection models on proprietary driving data, enabling rapid deployment of new vehicle perception systems.

Retail: Object detection models fine-tuned on product catalogs reduce inventory loss by 40% and improve stock accuracy from 87% to 96%.

Healthcare: A radiology center achieved 94% pneumonia detection accuracy by fine-tuning on just 500 labeled X-rays; training from scratch would have required 50,000 images.

Agriculture: Fine-tuned models detect crop disease early, reducing losses by 25-35% and optimizing irrigation and resource allocation.

Manufacturing: Quality control systems detect subtle defects invisible to human inspectors, improving product quality and reducing warranty costs.

The pattern is consistent: transfer learning accelerates time-to-value, reduces data requirements, and enables rapid iteration on specialized problems.

Data Preparation and Labeling

Data quality determines success more than quantity. You typically need 100-500 labeled examples for meaningful improvements, depending on task complexity and domain similarity.

Critical steps:

- Preprocessing: Resize and normalize images to the input format the pretrained model expects (for ImageNet models, the same per-channel mean and standard deviation used during pretraining); incorrect normalization can destroy the benefits of pretraining

- Augmentation: Random rotation, brightness changes, and cropping generate variations that prevent overfitting with limited data

- Quality Control: 500 perfectly labeled examples outperform 5,000 with 10% label error. Use multiple annotators and train only on high-confidence examples

- Expert Annotation: Medical imaging requires radiologist-quality labels. One hospital achieved 97% pneumonia detection accuracy with just 1,000 expert-annotated examples.
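Two of these steps are easy to get subtly wrong, so here is a minimal sketch of each in plain Python. The normalization uses the per-channel RGB mean and standard deviation that torchvision's ImageNet-pretrained models expect. The annotator filter keeps an example only when a large enough share of annotators agree; the 0.8 threshold and the file names are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Optional, Sequence, Tuple

# Per-channel (R, G, B) statistics used by ImageNet-pretrained torchvision models.
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)

def normalize_pixel(rgb: Tuple[int, int, int]) -> Tuple[float, ...]:
    """Scale 8-bit RGB values to [0, 1], then standardize each channel."""
    return tuple(
        (value / 255.0 - mean) / std
        for value, mean, std in zip(rgb, IMAGENET_MEAN, IMAGENET_STD)
    )

def consensus_label(votes: Sequence[str], min_agreement: float = 0.8) -> Optional[str]:
    """Return the majority label if enough annotators agree, else None (drop the example)."""
    label, count = Counter(votes).most_common(1)[0]
    return label if count / len(votes) >= min_agreement else None

# Hypothetical annotations from three annotators per image.
annotations: Dict[str, List[str]] = {
    "xray_001.png": ["pneumonia", "pneumonia", "pneumonia"],
    "xray_002.png": ["pneumonia", "normal", "normal"],
}
training_set: Dict[str, str] = {}
for path, votes in annotations.items():
    label = consensus_label(votes)
    if label is not None:  # keep only high-confidence examples
        training_set[path] = label
print(training_set)  # {'xray_001.png': 'pneumonia'}
```

Skipping the mean/std step, or applying it twice, shifts every input away from the distribution the pretrained filters were tuned for, which is why the preprocessing must match the checkpoint.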

Implementation Frameworks and Tools

- PyTorch: The industry-standard framework, with hundreds of pretrained models available through torchvision and the wider ecosystem

- Hugging Face Transformers: Pre-configured transfer learning pipelines enabling experimentation in minutes

- timm (PyTorch Image Models): 600+ pretrained vision models for rapid architecture comparison

- MMDetection/MMSegmentation: Specialized frameworks for detection, segmentation, and tracking

- Cloud Platforms: Google Cloud Vision API, AWS SageMaker, Azure ML provide pretrained models and managed infrastructure

Advanced Techniques

- Domain Adaptation: Gradually transitions models from source to target domain while preserving learned features

- Meta-Learning: Enables rapid adaptation with minimal data (few-shot learning)

- Knowledge Distillation: Compresses large models into smaller ones for edge deployment

- Neural Architecture Search: Automates optimal architecture selection for specific constraints

- Continual Learning: Incrementally adapts models to new data without forgetting previous knowledge
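Knowledge distillation is the most formula-driven item on this list. The sketch below computes the classic Hinton-style loss for a single example in plain Python: KL divergence between temperature-softened teacher and student distributions, scaled by T squared to keep gradient magnitudes comparable, blended with ordinary cross-entropy on the true label. The temperature and alpha defaults and the logits in the usage lines are illustrative assumptions.

```python
import math
from typing import List

def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax with temperature scaling."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      true_class: int, temperature: float = 4.0,
                      alpha: float = 0.5) -> float:
    """alpha * T^2 * KL(teacher_T || student_T) + (1 - alpha) * cross-entropy(label)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    hard_ce = -math.log(softmax(student_logits)[true_class])
    return alpha * temperature ** 2 * kl + (1 - alpha) * hard_ce

teacher = [8.0, 2.0, 0.5]   # large model: confident, but ranks the other classes
student = [3.0, 1.0, 0.2]   # small model being trained
print(distillation_loss(student, teacher, true_class=0))
```

The softened teacher distribution carries information about which wrong classes are "nearly right", which is what lets a much smaller student recover most of the teacher's accuracy for edge deployment.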

Deployment and Production Considerations

Moving to production requires:

- Model Serving: GPU infrastructure, efficient loading, and intelligent batching for throughput

- Containerization: Docker packages models with dependencies; Kubernetes enables scaling across compute clusters

- Real-time Optimization: Quantizing models to 8-bit integers reduces weight memory by 75% relative to 32-bit floats and can speed up inference by as much as 2-4x on hardware with efficient INT8 support, with minimal accuracy loss

- Edge Deployment: Knowledge distillation creates tiny models for cameras, robots, and mobile devices

- Monitoring: Automated systems detect performance degradation and trigger continuous retraining pipelines
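The 75% memory figure follows directly from storing each weight in 1 byte instead of 4. The sketch below shows the underlying arithmetic with simple per-tensor affine (asymmetric) uint8 quantization in plain Python; production toolkits such as PyTorch's quantization APIs add per-channel scales, calibration, and fused INT8 kernels, none of which are modeled here. The weight values are made up for illustration.

```python
from typing import List, Tuple

def quantize_uint8(values: List[float]) -> Tuple[List[int], float, int]:
    """Affine 8-bit quantization: x is approximated by (q - zero_point) * scale."""
    lo, hi = min(min(values), 0.0), max(max(values), 0.0)  # range must include 0.0
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 if every value is zero
    zero_point = round(-lo / scale)
    q = [max(0, min(255, round(v / scale) + zero_point)) for v in values]
    return q, scale, zero_point

def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Map 8-bit codes back to approximate float values."""
    return [(qi - zero_point) * scale for qi in q]

weights = [-0.62, -0.1, 0.0, 0.33, 0.91]
q, scale, zp = quantize_uint8(weights)
restored = dequantize(q, scale, zp)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, zp, round(max_error, 4))
print("memory per weight: 4 bytes (float32) -> 1 byte (uint8), a 75% reduction")
```

Rounding error per weight is bounded by roughly half the scale, which is why accuracy usually drops only slightly; the speedup, by contrast, depends entirely on whether the target hardware executes INT8 arithmetic natively.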

Common Pitfalls

- Domain Shift: Models that perform well on ImageNet-like data can fail on production images that look different. Mitigate by selecting training data that matches the production distribution

- Class Imbalance: On imbalanced data, models learn to predict the majority class. Use weighted sampling and focal loss

- Catastrophic Forgetting: Models lose pretrained capabilities during fine-tuning. Use low learning rates for pretrained layers and appropriate regularization

- Overfitting: Models memorize small datasets. Use aggressive augmentation, early stopping, and dropout
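For class imbalance, both remedies reduce to a few lines of arithmetic. The plain-Python sketch below computes inverse-frequency ("balanced") class weights, turns them into per-example weights of the kind PyTorch's `torch.utils.data.WeightedRandomSampler` accepts, and implements the focal loss for one example, which down-weights easy, confidently classified examples. The 9:1 label split and the gamma and alpha defaults are illustrative assumptions.

```python
import math
from collections import Counter
from typing import Dict, List, Sequence

def balanced_class_weights(labels: Sequence[int]) -> Dict[int, float]:
    """Inverse-frequency weights: n_samples / (n_classes * count_of_class)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}

def sample_weights(labels: Sequence[int], class_weights: Dict[int, float]) -> List[float]:
    """Per-example weights for a weighted sampler, so rare classes are drawn more often."""
    return [class_weights[y] for y in labels]

def focal_loss(p_true: float, gamma: float = 2.0, alpha: float = 1.0) -> float:
    """Focal loss for one example, given the predicted probability of its true class.

    The (1 - p_true) ** gamma factor shrinks the loss on easy, confidently correct
    examples (often the majority class) so training focuses on hard ones.
    gamma = 0 and alpha = 1 recover ordinary cross-entropy.
    """
    return -alpha * (1 - p_true) ** gamma * math.log(p_true)

labels = [0] * 90 + [1] * 10  # 9:1 imbalance
weights = balanced_class_weights(labels)
print(weights)  # class 1 weighted 9x more than class 0
print(focal_loss(0.95), focal_loss(0.3))
```

Weighted sampling changes what the model sees, while focal loss changes how much each mistake costs; the two can be combined, but applying both at full strength may over-correct toward the minority class.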

Best Practices

- Maintain Model Libraries: Standardize on shared pretrained models and fine-tuning utilities for consistent, accelerated development

- Rigorous Evaluation: Go beyond overall accuracy; test robustness to transformations, generalization to underrepresented groups, and edge cases

- Version Everything: Document which pretrained model, data, and hyperparameters produced each version to understand performance gains

- Plan for Continuous Improvement: Deploy as a baseline, collect production failures, retrain on expanded data, and iterate; treat models as evolving systems
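Checking generalization to underrepresented groups largely comes down to reporting metrics per slice instead of in aggregate, because a strong headline number can hide a slice where the model fails. The sketch below computes accuracy separately for each slice in plain Python; the predictions, labels, and the camera-based slicing are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence

def accuracy_by_slice(
    predictions: Sequence[int], labels: Sequence[int], slices: Sequence[Hashable]
) -> Dict[Hashable, float]:
    """Accuracy computed separately for each slice (e.g. device, site, demographic group)."""
    correct: Dict[Hashable, int] = defaultdict(int)
    total: Dict[Hashable, int] = defaultdict(int)
    for pred, label, group in zip(predictions, labels, slices):
        total[group] += 1
        correct[group] += int(pred == label)
    return {group: correct[group] / total[group] for group in total}

# Hypothetical evaluation results, tagged with the camera that captured each image.
preds  = [1, 0, 1, 1, 0, 1, 0, 0]
labels = [1, 0, 1, 1, 1, 0, 1, 0]
camera = ["A", "A", "A", "A", "B", "B", "B", "B"]
overall = sum(p == y for p, y in zip(preds, labels)) / len(labels)
per_slice = accuracy_by_slice(preds, labels, camera)
worst = min(per_slice, key=per_slice.get)
print(overall, per_slice, worst)  # 0.625 {'A': 1.0, 'B': 0.25} B
```

Here a respectable 62.5% overall accuracy conceals a model that is nearly useless on camera B, exactly the kind of failure worth collecting and retraining on.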

The Future

Foundation Models: Trained on billions of images, foundation models will enable faster deployment and better performance than ImageNet-based transfer learning.

Multimodal Learning: CLIP models trained on image-text pairs enable more intuitive adaptation to new concepts while maintaining efficiency.
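The mechanism behind this adaptability is zero-shot classification: an image embedding is compared against embeddings of text prompts, and the closest prompt wins, so adding a new concept means writing a new prompt rather than collecting labels. The sketch below shows only that matching step in plain Python; the 3-dimensional vectors are hypothetical stand-ins for the outputs of CLIP's image and text encoders.

```python
import math
from typing import Dict, List

def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def zero_shot_classify(image_emb: List[float], prompt_embs: Dict[str, List[float]]) -> str:
    """Pick the class whose text-prompt embedding is most similar to the image embedding."""
    return max(prompt_embs, key=lambda name: cosine(image_emb, prompt_embs[name]))

# Toy vectors standing in for real CLIP encoder outputs (hypothetical values).
prompts = {
    "a photo of a scratched panel": [0.9, 0.1, 0.0],
    "a photo of a clean panel": [0.1, 0.9, 0.1],
}
image_embedding = [0.8, 0.2, 0.1]
print(zero_shot_classify(image_embedding, prompts))  # a photo of a scratched panel
```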

Synthetic Data: 3D models and simulation environments generate unlimited labeled data. Transfer learning from synthetic to real data achieves strong performance despite simulation gaps.

Conclusion

Transfer learning has fundamentally transformed enterprise vision system development. Organizations now deploy production-quality models faster and cheaper than ever before.

The question is not whether to use transfer learning, but which pretrained foundation and fine-tuning strategy best fits your constraints. Organizations that master these decisions lead in computer vision innovation.

Start with established pretrained models, implement rigorous evaluation, monitor production performance, and iterate continuously. This approach, rooted in proven best practices, positions organizations for sustained competitive advantage.

Share your computer vision transfer learning challenges and successes in the comments below. What domain adaptations have you found most effective? What pitfalls have you encountered? Subscribe to stay updated on the latest advances in AI architecture and enterprise vision systems. Together, we can advance the practice of transfer learning for computer vision.