Artificial Intelligence is no longer about building everything from scratch. Many of the most successful AI systems today are built on top of models that have already learned from large amounts of data. This is where Transfer Learning and Pretrained Models redefine how enterprises approach machine learning.

If you have explored my previous article on Reinforcement Learning for Sequential Data, you already understand how AI systems learn from interaction and feedback loops. Transfer Learning complements that journey by enabling models to reuse knowledge learned from one domain and apply it effectively to another. Together, they form a powerful toolkit for modern AI architects designing scalable and cost-efficient systems.

This article breaks down Transfer Learning concepts in a way that is practical, business-aligned, and technically grounded. It focuses on real-world application, performance impact, and future relevance.

What is Transfer Learning

Transfer Learning is a machine learning technique where a model trained on one task is reused as the starting point for a different but related task.

Instead of training a model from scratch, which requires massive datasets and compute resources, organizations leverage pretrained models that already understand patterns such as language, images, or signals.

This approach significantly reduces training time, cost, and data requirements, and it often improves model performance, especially when target-domain data is limited.

Simple Analogy

Think of a professional pianist learning a new instrument like the violin. The understanding of music theory, rhythm, and composition transfers. Only the instrument-specific nuances need to be learned.

That is exactly how Transfer Learning works in AI.

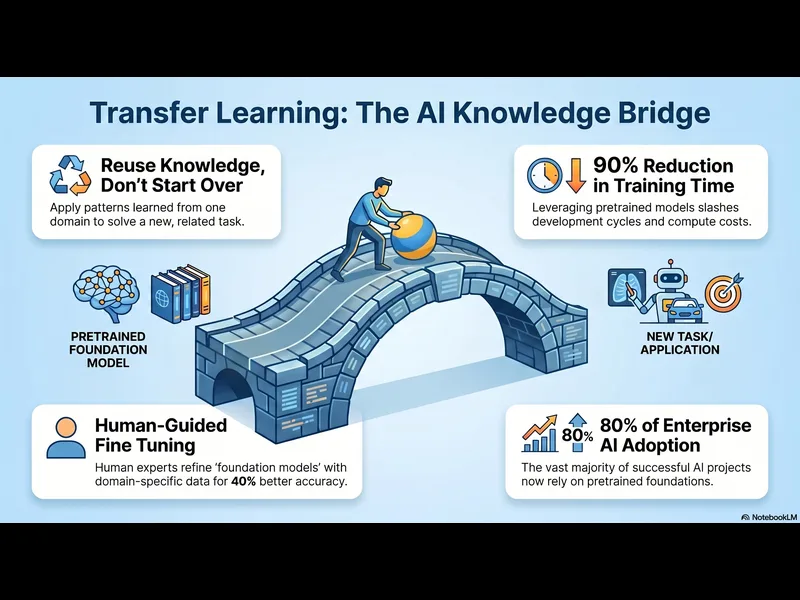

Why Transfer Learning Matters in 2026

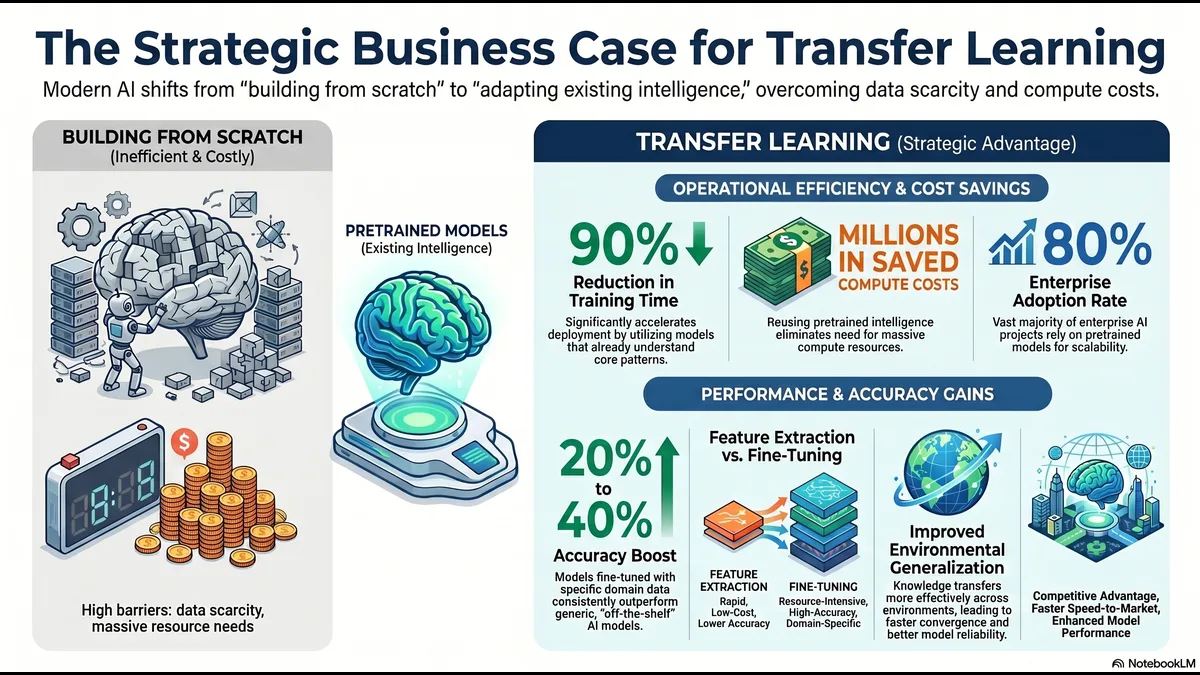

AI adoption is accelerating across industries, but data scarcity and compute cost remain major challenges.

Transfer Learning addresses both.

Key Industry Data

- Over 80 percent of enterprise AI projects now rely on pretrained models

- Training large models from scratch can cost millions of dollars

- Transfer Learning can reduce training time by up to 90 percent

- Models fine-tuned with domain data can outperform generic models by 20 to 40 percent in accuracy on domain-specific tasks

These numbers highlight a critical shift. AI is moving from model creation to model adaptation.

Types of Transfer Learning

Understanding the different types of Transfer Learning helps in selecting the right strategy.

1. Inductive Transfer Learning

- The source and target tasks are different, but the knowledge gained helps improve learning.

- Example: using an image recognition model trained on general objects to detect medical anomalies.

2. Transductive Transfer Learning

- The task remains the same, but the data distribution changes.

- Example: adapting a sentiment analysis model trained on movie reviews to product reviews.

3. Unsupervised Transfer Learning

- Used when the target task is unsupervised, such as clustering, and no labeled data is available in the target domain.

- Example: clustering customer behavior using embeddings learned from large datasets.
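The unsupervised case can be sketched in a few lines. This is a minimal illustration under stated assumptions: the customer vectors below are made-up stand-ins for embeddings that some pretrained model would produce, and `kmeans` is a hand-written helper rather than a library API (in practice a library implementation such as scikit-learn's `KMeans` would be the usual choice). The point is that no labels are needed: the grouping comes entirely from structure the pretrained embeddings already capture.

```python
import math


def kmeans(points, k, iterations=20):
    """Plain k-means: assign points to the nearest centroid, then recompute centroids."""
    # Deterministic initialisation: use the first k points as starting centroids.
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: nearest centroid by Euclidean distance.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its assigned points.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:  # keep the previous centroid if a cluster ends up empty
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels, centroids


# Hypothetical embeddings: stand-ins for what a pretrained model might output per customer.
customer_embeddings = [
    [0.9, 0.1, 0.0],
    [0.1, 0.9, 0.2],
    [0.8, 0.2, 0.1],
    [0.2, 0.8, 0.1],
    [0.95, 0.05, 0.0],
    [0.15, 0.85, 0.15],
]

labels, _ = kmeans(customer_embeddings, k=2)
print(labels)  # [0, 1, 0, 1, 0, 1]
```

With these hypothetical vectors, the two groups of similar customers land in separate clusters without a single label being supplied.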

Pretrained Models: The Foundation of Modern AI

Pretrained models are models trained on massive datasets and made available for reuse.

These models act as a foundation for building specialized applications.

Popular Categories of Pretrained Models

Natural Language Processing

- Language models trained on billions of words

- Used for chatbots, summarization, and translation

Computer Vision

- Models trained on millions of images

- Used for object detection, facial recognition, and medical imaging

Audio and Speech

- Models trained on speech datasets

- Used for transcription and voice assistants

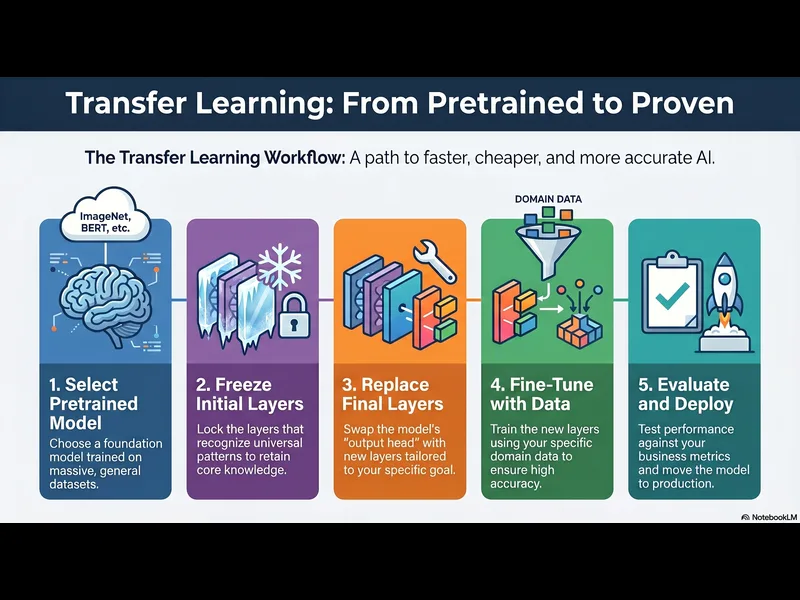

Infographic: Transfer Learning Workflow

Workflow Explanation

- Select a pretrained model

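- Freeze the early layers so they retain the general features learned during pretraining

- Replace the final layers with new ones that match the new task, for example a classifier with the target number of classes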

- Fine-tune using domain-specific data

- Evaluate and deploy
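The workflow above can be sketched end to end in plain Python. Everything here is a toy under loudly stated assumptions: `PRETRAINED_WEIGHTS` is a hypothetical stand-in for weights that would normally be loaded from a checkpoint, the data is invented, and the new head is a simple logistic-regression layer. This sketch keeps the whole backbone frozen and trains only the replacement head; unfreezing some backbone layers and updating them as well would turn it into fine tuning.

```python
import math

# Step 1 (hypothetical stand-in): a "pretrained" hidden layer. In a real project
# these weights would be loaded from a published checkpoint, not typed in.
PRETRAINED_WEIGHTS = [[1.0, -1.0], [-1.0, 1.0], [0.5, 0.5]]


def backbone(x):
    """Frozen feature extractor: linear layer followed by ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in PRETRAINED_WEIGHTS]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(data, epochs=200, lr=0.5):
    """Steps 2-4: keep the backbone frozen and train only a new logistic-regression head."""
    w, b = [0.0] * len(PRETRAINED_WEIGHTS), 0.0  # new task-specific final layer
    for _ in range(epochs):
        for x, y in data:
            h = backbone(x)  # features from the frozen layer; its weights never change
            p = sigmoid(sum(wi * hi for wi, hi in zip(w, h)) + b)
            error = p - y  # gradient of binary cross-entropy w.r.t. the logit
            w = [wi - lr * error * hi for wi, hi in zip(w, h)]
            b -= lr * error
    return w, b


def predict(w, b, x):
    h = backbone(x)
    return 1 if sum(wi * hi for wi, hi in zip(w, h)) + b > 0 else 0


# Hypothetical labelled target-domain data: label 1 when the first input dominates.
train_data = [([2.0, 0.5], 1), ([0.3, 1.8], 0), ([1.5, 0.2], 1), ([0.1, 1.2], 0)]
held_out = [([1.75, 0.35], 1), ([0.2, 1.5], 0)]

w, b = train_head(train_data)

# Step 5: evaluate on held-out data before deploying.
accuracy = sum(predict(w, b, x) == y for x, y in held_out) / len(held_out)
print(f"held-out accuracy: {accuracy:.2f}")
```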

Feature Extraction vs Fine Tuning

Two core strategies define how Transfer Learning is implemented.

Feature Extraction

- The pretrained model acts as a fixed feature extractor. Only the final layers are trained.

- Use case: small datasets and limited compute resources

Fine Tuning

- Some or all of the pretrained layers are unfrozen and retrained on the new data, typically with a small learning rate.

- Use case: high accuracy requirements and domain-specific applications

Key Insight

Feature extraction is faster and cheaper; fine tuning usually achieves higher accuracy but is more resource-intensive.
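One way to see why feature extraction is cheaper is to count how many parameters each strategy actually updates. The sketch below assumes a hypothetical stack of fully connected layers (the sizes are invented for illustration) and counts weights plus biases. Trainable-parameter count is only a proxy for cost: the forward pass still runs through the whole model either way, but gradients and optimizer state are only needed for the layers being trained.

```python
# Hypothetical fully connected stack: (name, inputs, outputs).
# The first three layers stand in for a pretrained backbone; the last is the new head.
LAYERS = [
    ("backbone_1", 1024, 512),
    ("backbone_2", 512, 256),
    ("backbone_3", 256, 128),
    ("new_head", 128, 10),
]


def layer_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out


def trainable_params(layers, unfrozen):
    """Parameters updated during training.

    The new head is always trained. `unfrozen` is how many of the last backbone
    layers are also updated: 0 is feature extraction, larger values are fine tuning.
    """
    backbone, head = layers[:-1], layers[-1]
    if not 0 <= unfrozen <= len(backbone):
        raise ValueError("unfrozen must be between 0 and the number of backbone layers")
    trained = backbone[len(backbone) - unfrozen:] + [head]
    return sum(layer_params(n_in, n_out) for _, n_in, n_out in trained)


total = sum(layer_params(n_in, n_out) for _, n_in, n_out in LAYERS)
for unfrozen in range(len(LAYERS)):
    print(f"unfrozen backbone layers: {unfrozen} -> "
          f"{trainable_params(LAYERS, unfrozen):,} of {total:,} parameters trained")
```

With these made-up sizes, pure feature extraction trains 1,290 of 690,314 parameters, while unfreezing the entire backbone trains all of them.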

Real World Use Cases Driving Adoption

Transfer Learning is not theoretical. It is powering real business outcomes.

Healthcare

- Pretrained vision models detect diseases from X-rays and MRIs

- Reduced need for large labeled medical datasets

Finance

- Fraud detection systems adapt quickly to new patterns

- Risk modeling improves using representations pretrained on behavioral data

Retail and E-commerce

- Recommendation engines leverage pretrained embeddings

- Customer segmentation becomes more accurate

Autonomous Systems

- Self-driving models reuse knowledge across environments

- Reinforcement Learning integrates with Transfer Learning for continuous improvement

This is where the connection to my previous article becomes important. Reinforcement Learning systems can use pretrained models as starting points, reducing exploration time and improving policy learning efficiency.

Transfer Learning and Reinforcement Learning: A Strategic Link

In my earlier article on Reinforcement Learning for Sequential Data, I discussed how agents learn through trial and error.

Transfer Learning enhances this by providing a strong initial policy or representation.

Combined Benefits

- Faster convergence

- Reduced training cycles

- Better generalization across environments

Example

A robotics system trained in simulation can transfer knowledge to real-world operations, minimizing risk and cost.
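The warm-start idea can be illustrated with a toy tabular example. This is a sketch under invented assumptions, not a robotics pipeline: the environment is a hypothetical six-state corridor, the agent uses standard tabular Q-learning, and the gap between "simulation" and "real world" is modeled simply as a larger goal reward in the target task. The target agent starts either from an all-zero Q-table or from a copy of the Q-table learned in simulation.

```python
import random

N_STATES = 6  # hypothetical corridor: states 0..5, state 5 is the goal
LEFT, RIGHT = 0, 1


def step(state, action, goal_reward):
    """Deterministic corridor: reward is paid only when the agent reaches the goal."""
    nxt = max(state - 1, 0) if action == LEFT else state + 1
    done = nxt == N_STATES - 1
    return nxt, (goal_reward if done else 0.0), done


def greedy(q, state, rng):
    """Best action under q, breaking ties at random."""
    best = max(q[state])
    return rng.choice([a for a in (LEFT, RIGHT) if q[state][a] == best])


def policy_is_optimal(q):
    """In this corridor the optimal policy moves right in every non-goal state."""
    return all(q[s][RIGHT] > q[s][LEFT] for s in range(N_STATES - 1))


def q_learning(q, goal_reward, episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning that updates q in place.

    Returns the first episode index at which the greedy policy was already optimal,
    or None if that never happened.
    """
    rng = random.Random(seed)
    converged_at = None
    for episode in range(episodes):
        if converged_at is None and policy_is_optimal(q):
            converged_at = episode
        state = 0
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                action = rng.choice((LEFT, RIGHT))  # explore
            else:
                action = greedy(q, state, rng)  # exploit
            nxt, reward, done = step(state, action, goal_reward)
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            if done:
                break
    return converged_at


def new_q_table():
    return [[0.0, 0.0] for _ in range(N_STATES)]


# Source task ("simulation"): goal reward 1.
source_q = new_q_table()
q_learning(source_q, goal_reward=1.0)

# Target task ("real world"): hypothetically the same corridor with a goal reward of 10.
scratch_q = new_q_table()
transfer_q = [row[:] for row in source_q]  # warm start from the source Q-table

scratch_ep = q_learning(scratch_q, goal_reward=10.0, seed=1)
transfer_ep = q_learning(transfer_q, goal_reward=10.0, seed=1)
print("first optimal episode (scratch): ", scratch_ep)
print("first optimal episode (transfer):", transfer_ep)
```

Because both tasks share the same optimal behavior, the warm-started agent's greedy policy is already optimal before any target-domain training, while the agent trained from scratch has to explore first. This is the most favorable case for transfer; when source and target differ more, copied values can mislead the agent instead.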

Infographic: Feature Extraction vs Fine Tuning

Challenges and Limitations

Transfer Learning is powerful but not without challenges.

Domain Mismatch

- If the source and target domains are too different, performance may degrade.

Negative Transfer

- In some cases, transferred knowledge hurts performance, leaving the model worse than one trained from scratch on the target data.

Data Bias

- Pretrained models may carry biases from training data.

Model Complexity

- Large pretrained models require careful optimization for deployment.

Best Practices for AI Leaders

To maximize the value of Transfer Learning, organizations should follow structured practices.

Choose the Right Model

- Select models aligned with your domain.

Evaluate Data Similarity

- Ensure the source and target domains are reasonably related.
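One rough way to check whether two text domains are related is to measure how much vocabulary they share. The sketch below uses Jaccard similarity over word sets; it is an illustrative heuristic, not an established standard, and the sample sentences are invented. Vocabulary overlap ignores meaning and only applies to text, so a low score is a warning sign of domain mismatch rather than proof.

```python
import re


def vocabulary(texts):
    """Set of lower-cased word tokens across a small corpus."""
    return {token for text in texts for token in re.findall(r"[a-z']+", text.lower())}


def jaccard(a, b):
    """Shared words divided by all distinct words: 0.0 = no overlap, 1.0 = identical."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


# Hypothetical samples: a source domain and two candidate target domains.
movie_reviews = ["The acting was great and the plot was gripping",
                 "Terrible plot, boring and far too long"]
product_reviews = ["Great battery, but the charger was terrible",
                   "Boring design and far too expensive"]
sensor_logs = ["temp 21.4 humidity 40 status ok",
               "temp 22.1 humidity 38 status ok"]

source_vocab = vocabulary(movie_reviews)
scores = {
    name: jaccard(source_vocab, vocabulary(corpus))
    for name, corpus in [("product reviews", product_reviews), ("sensor logs", sensor_logs)]
}
for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

Here the product reviews share some vocabulary with the movie reviews (about 0.47), while the sensor logs share none, which matches the intuition that a movie-review sentiment model is a more reasonable starting point for product reviews.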

Start with Feature Extraction

- Use it as a baseline before moving to fine tuning.

Monitor Performance Continuously

- Track accuracy, latency, and business metrics.

Optimize for Deployment

- Use model compression and quantization where needed.
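Quantization can be illustrated with a hand-rolled sketch of symmetric, per-tensor int8 post-training quantization: each weight is divided by a scale derived from the largest absolute weight and rounded to an integer in [-127, 127], so it can be stored in one byte instead of the four bytes used by float32. The weights are hypothetical, and production frameworks provide their own quantization tooling with calibration and per-channel scales; this only shows the core arithmetic.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Approximate reconstruction of the original floats."""
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.003, 0.9, -0.5]  # hypothetical layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q)  # [42, -127, 0, 90, -50]
print(f"scale={scale:.4f}, max abs error={max_error:.4f}")
```

The round trip loses at most half a quantization step per weight; here the small 0.003 weight is the one that collapses to zero.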

Future Trends in Transfer Learning

The next wave of AI innovation will heavily rely on Transfer Learning.

Foundation Models

- Large-scale models that serve multiple tasks across domains.

Multimodal Learning

- Combining text, image, and audio knowledge in a single model.

Low Code AI Platforms

- Making Transfer Learning accessible to non-experts.

Edge AI

- Deploying fine-tuned models on devices with limited compute.

Key Takeaways

Transfer Learning is not just a technique; it is a strategic advantage.

- Reduces cost and time significantly

- Enables high performance with limited data

- Accelerates AI adoption across industries

- Complements Reinforcement Learning and other AI paradigms

Organizations that embrace Transfer Learning will lead in AI innovation.